One Hot Encoding vs Label Encoding

Label Encoding vs One Hot Encoding. Label encoding may look intuitive to us humans, but machine learning algorithms can misinterpret it by assuming the numbers carry an ordinal ranking. In the example below, Apple has an encoding of 1 and Broccoli has an encoding of 3. That does not mean Broccoli is "higher" than Apple, yet the encoding can mislead the ML algorithm into treating it that way.

A reader asked: "When I tried both label and one hot encoding on the dataset, one hot encoding gave better accuracy and precision. Can you kindly share your thoughts? The accuracy scores of various models on train and test are: simple decision tree on label encoded data, TRAIN: 86.46%, TEST: 79.42%; the accuracy score of the tuned decision …"
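To see concretely how integer codes can mislead a model, the sketch below compares pairwise distances under both encodings. Only Apple = 1 and Broccoli = 3 come from the example; "Chicken" = 2 is a hypothetical middle category added for illustration. A distance-based model (k-nearest neighbours, for instance) would see Apple as closer to Chicken than to Broccoli under label encoding, while one-hot vectors are all equally far apart.

```python
import numpy as np

# Label codes: Apple = 1 and Broccoli = 3 as in the example;
# "Chicken" = 2 is a hypothetical category added for illustration.
label_codes = {"Apple": 1, "Chicken": 2, "Broccoli": 3}

# One-hot vectors: one position per category, a single 1 per vector.
categories = list(label_codes)
one_hot = {c: np.eye(len(categories))[i] for i, c in enumerate(categories)}

def label_distance(a, b):
    # Absolute difference between the integer codes
    return abs(label_codes[a] - label_codes[b])

def one_hot_distance(a, b):
    # Euclidean distance between the one-hot vectors
    return float(np.linalg.norm(one_hot[a] - one_hot[b]))

for a, b in [("Apple", "Chicken"), ("Apple", "Broccoli"), ("Chicken", "Broccoli")]:
    print(f"{a}-{b}: label={label_distance(a, b)}, one-hot={one_hot_distance(a, b):.3f}")
```

Under label encoding the Apple-Broccoli distance (2) is twice the Apple-Chicken distance (1), an ordering that does not exist in the data; every one-hot pair is sqrt(2) apart.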

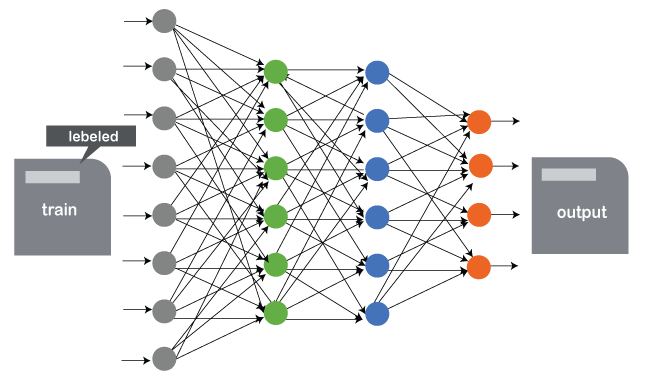

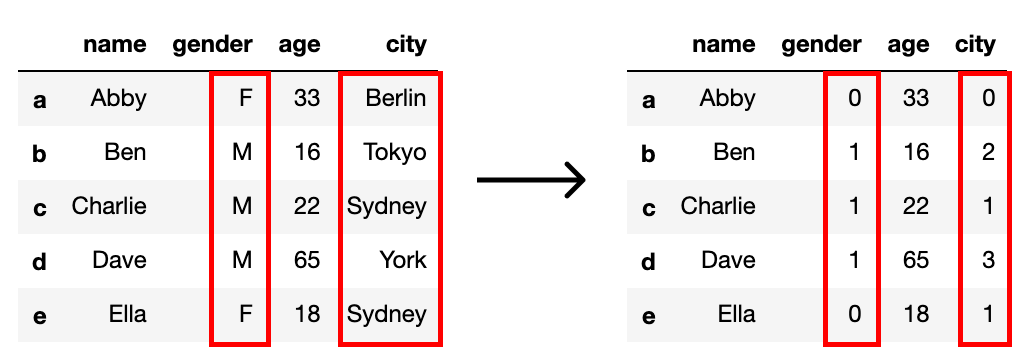

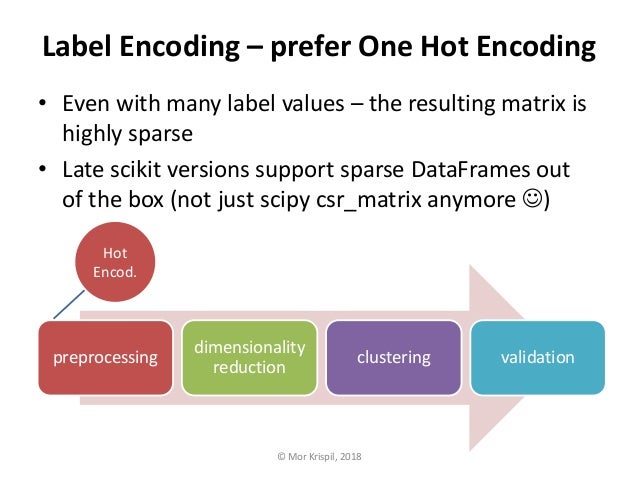

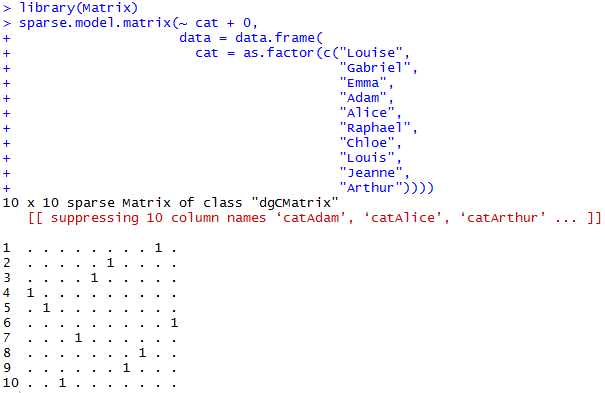

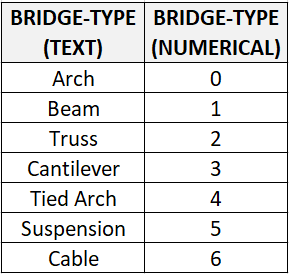

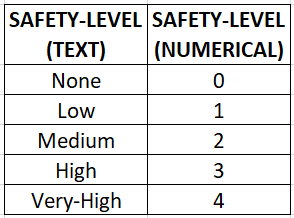

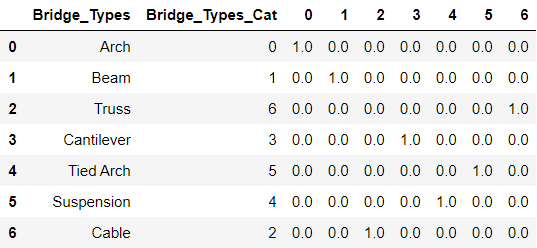

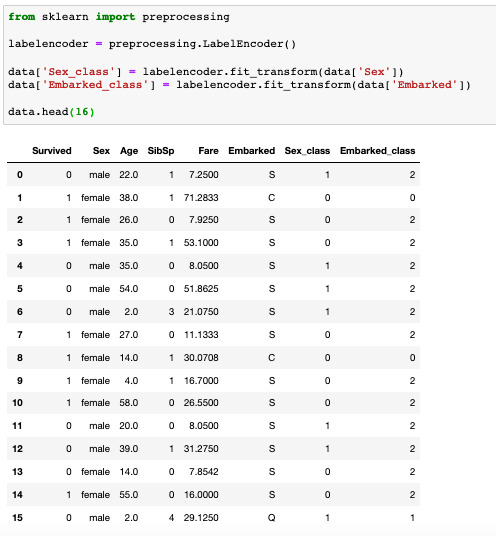

We will cover some popular encoding approaches: label encoding, one-hot encoding, and ordinal encoding.

Label Encoding. In label encoding in Python, we replace each categorical value with a numeric value between 0 and the number of classes minus 1.
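A minimal sketch of label encoding with scikit-learn's `LabelEncoder`, using a small hypothetical list of pet names. The codes run from 0 to the number of classes minus 1, assigned in sorted order of the class names, and the mapping can be reversed.

```python
from sklearn.preprocessing import LabelEncoder

# Hypothetical sample data
pets = ["cat", "dog", "bird", "dog"]

encoder = LabelEncoder()
codes = encoder.fit_transform(pets)  # learn the classes, then encode

print(encoder.classes_)  # ['bird' 'cat' 'dog'] (sorted order)
print(codes)             # [1 2 0 2]

# Decode the integers back to the original labels
print(encoder.inverse_transform(codes))
```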


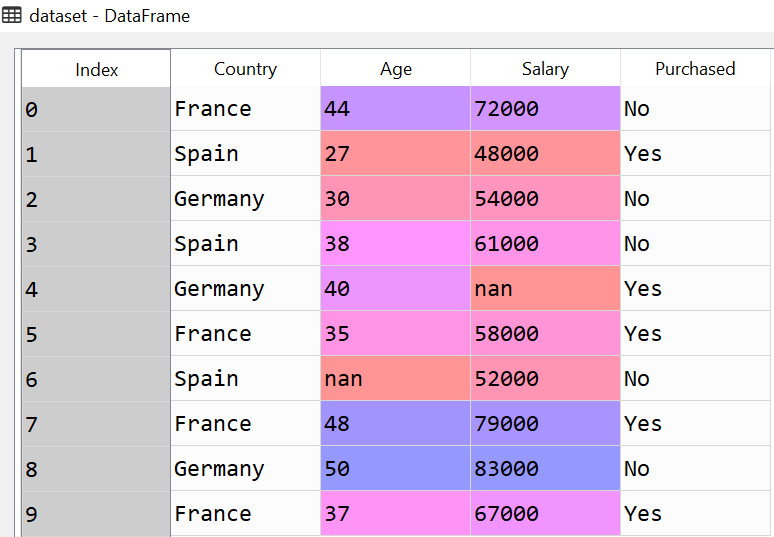

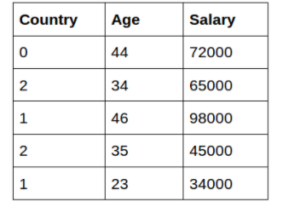

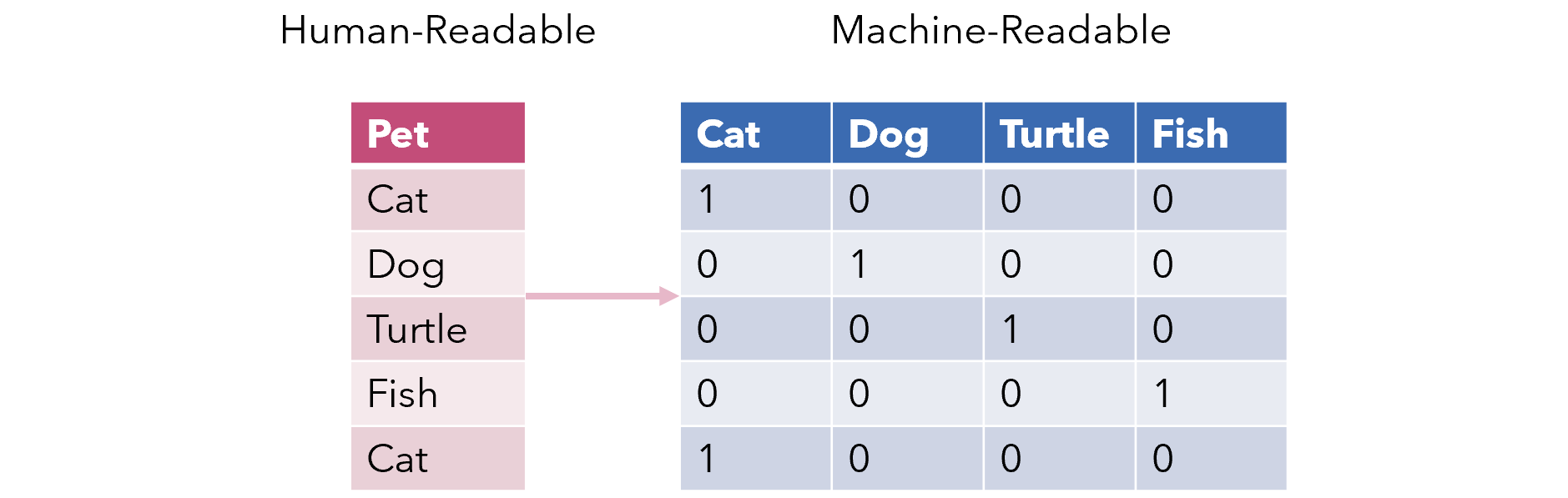

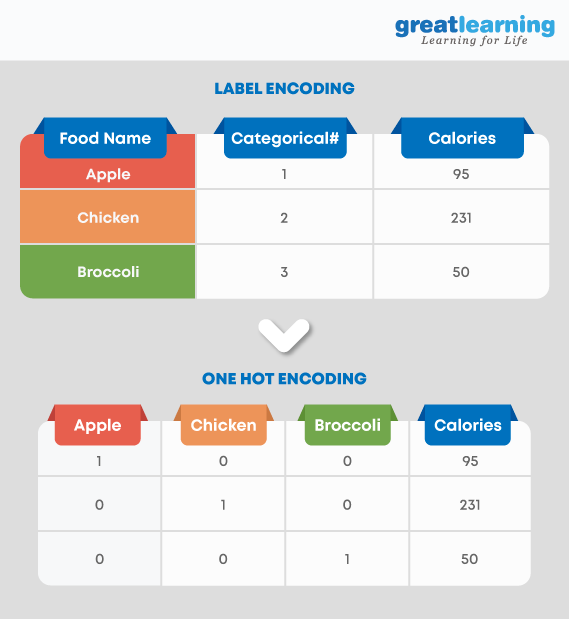

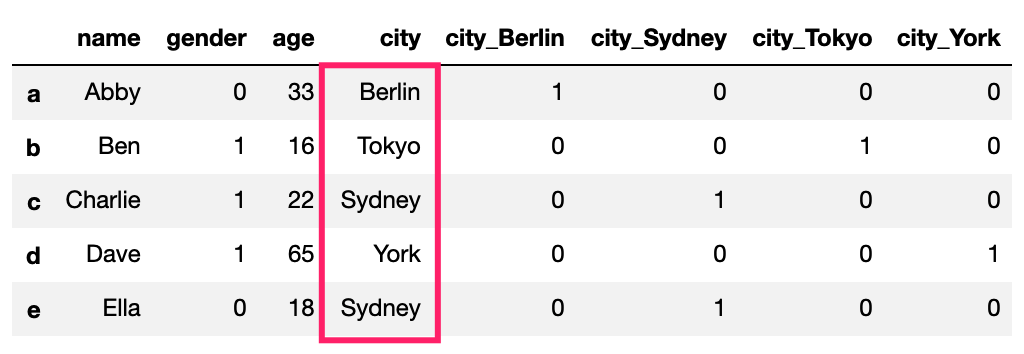

Label Encoding and One Hot Encoding

1. Label Encoding. Label encoding is mostly suitable for ordinal data, because it gives a number to each unique value in the data. If we use label encoding on nominal data, we give the model incorrect information about our data: the algorithm can act as if there is a hierarchy among the values.

2. One Hot Encoding. One hot encoding takes a column of categorical data (traditionally one that has already been label encoded) and splits it into multiple columns. The numbers are replaced by 1s and 0s, depending on which column has which value. In our example, we get three new columns, one for each country: France, Germany, and Spain. Note that the one-hot encoder does not accept 1-D arrays; its input must always be a 2-D array.

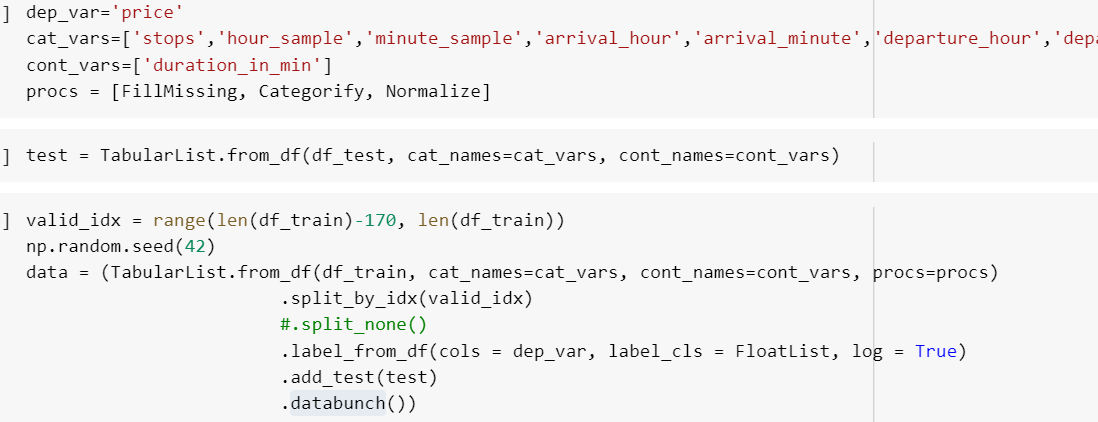

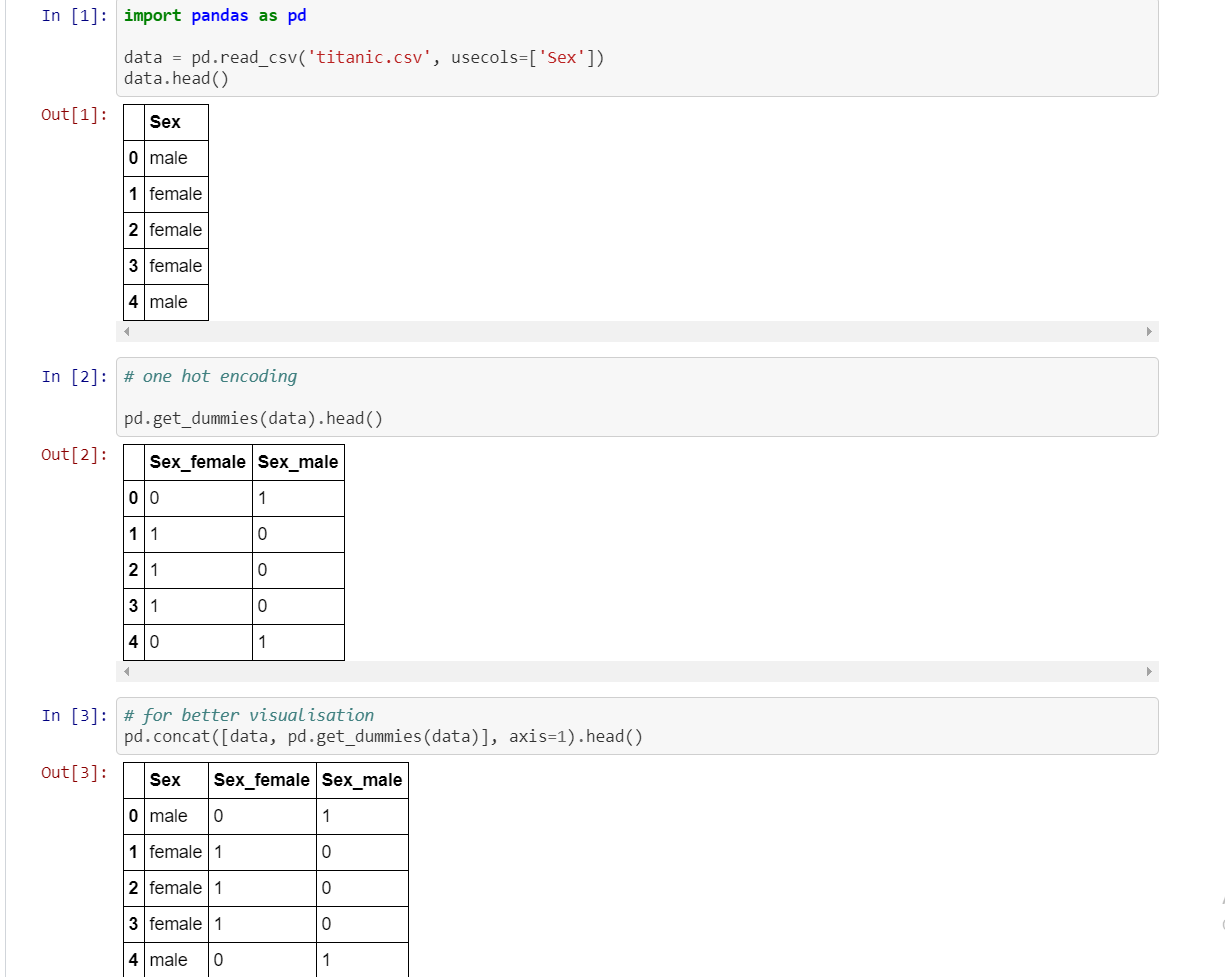

A related Q&A thread, "Label encoding vs Dummy variable/one hot encoding - correctness?", asks: "I understand that when label encoding is used, the numeric number can be interpreted to have an order and a model could assume a linear relationship. However, shouldn't this be …"

Let's consider when to apply OHE and label encoding while building non-tree-based models. For label encoding to be used effectively, the dependence between the feature and the target must be linear in the integer codes. If the dependence is non-linear, you may want to use OHE instead.

In this tutorial, you will learn how to apply label encoding and one-hot encoding using scikit-learn and pandas. Encoding is a method to convert categorical va... The approaches are label encoding, one-hot encoding, and ordinal encoding; however, we will be covering only label encoding throughout this tutorial.

Understanding Label Encoding. In Python label encoding, we replace each categorical value with a numerical value ranging from zero to the total number of classes minus one. For instance, if the value of the categorical …
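The linearity condition can be illustrated with a small synthetic sketch (the data are hypothetical): the target is high for category "b" and low for "a" and "c", so it is not a linear function of the codes a=0, b=1, c=2. A linear regression on the single label-encoded column cannot fit this, while the one-hot columns give each category its own coefficient.

```python
import pandas as pd
from sklearn.linear_model import LinearRegression

# Hypothetical data: y peaks at "b", so it is NOT linear in the codes 0, 1, 2
df = pd.DataFrame({"cat": ["a", "b", "c"] * 10})
df["y"] = df["cat"].map({"a": 0.0, "b": 10.0, "c": 0.0})

# Label encoding: a single integer column
X_label = df["cat"].map({"a": 0, "b": 1, "c": 2}).to_frame()
r2_label = LinearRegression().fit(X_label, df["y"]).score(X_label, df["y"])

# One-hot encoding: one indicator column per category
X_ohe = pd.get_dummies(df["cat"], dtype=float)
r2_ohe = LinearRegression().fit(X_ohe, df["y"]).score(X_ohe, df["y"])

print(f"R^2 with label encoding:   {r2_label:.3f}")  # close to 0
print(f"R^2 with one-hot encoding: {r2_ohe:.3f}")    # close to 1
```

If the target instead rose steadily from "a" to "c", the single label-encoded column would fit just as well with one coefficient, which is the case where label encoding works for linear models.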

Here we use one hot encoders because they create a separate column for each category, which records whether that category applies to a particular entry by setting its value to 1 or 0.

One-Hot Encoding on Gender Column.

2. Ordinal Encoding. Ordinal encoding is applied specifically to only those features … The two most popular techniques are ordinal encoding and one-hot encoding. In this tutorial, you will discover how to use encoding schemes for categorical machine learning data. After completing this tutorial, you will know that encoding is a required pre-processing step when working with categorical data for machine learning algorithms.

One Hot Encoding is ideal for this situation. A reader reported: "Considering that, I have done data pre-processing where a few of the object data type columns are categorical in nature (including Car Company), and I have applied one hot encoding. But Linear Regression did not work; the R² value was negative. The same data with Decision Tree Regression gave an R² value of 0. …"

One-Hot Encoding. Label encoding implies a hierarchy in the column, which can be misleading for nominal features in the data. To overcome this disadvantage, we can use the one-hot encoding strategy. One-hot encoding is processed in 2 steps: first, the categories are split into different columns; second, we put 0 for the others and 1 as an …
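The two steps described above can be sketched in plain Python. The function `one_hot_encode` and the car-company names are hypothetical, chosen only for illustration; this is not a library API.

```python
def one_hot_encode(values):
    """Minimal two-step one-hot encoder (illustrative sketch, not a library API)."""
    # Step 1: split the categories into separate columns, one per unique value
    columns = sorted(set(values))
    # Step 2: put 1 in the column matching each value and 0 in all the others
    rows = [[1 if value == column else 0 for column in columns] for value in values]
    return columns, rows

# Hypothetical "Car Company" values
columns, rows = one_hot_encode(["audi", "bmw", "audi", "toyota"])
print(columns)  # ['audi', 'bmw', 'toyota']
print(rows)     # [[1, 0, 0], [0, 1, 0], [1, 0, 0], [0, 0, 1]]
```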

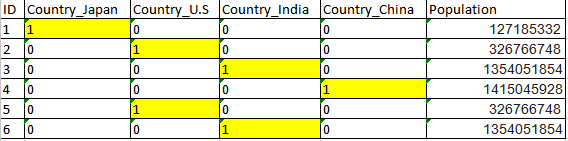

In another example, one hot encoding the country column gives four new columns, one for each country: Japan, U.S., India, and China. In the above code, we first printed the sequence of labels, then performed integer encoding, and finally one hot encoding. The OneHotEncoder class returns a sparse encoding by default. That saves memory, but it is not convenient for some applications, such as use with the Keras library.

One Hot Encoding with Keras

For instance, suppose a dataset has a level column that includes beginners, intermediate, and advanced. Encoded in that order, these become 0, 1, and 2 respectively. Note that scikit-learn's LabelEncoder assigns codes in sorted (alphabetical) order, so it would instead map advanced to 0, beginners to 1, and intermediate to 2.

OneHot Encoding. One-Hot Encoding is one of the most widely used encoding methods in ML models.
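A short sketch of the ordering pitfall with the beginners/intermediate/advanced levels: scikit-learn's `LabelEncoder` sorts class names alphabetically, so it does not produce 0, 1, 2 in the intended order. One option, shown here, is a pandas ordered `Categorical` with the categories listed explicitly. The sample rows are hypothetical.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

# Hypothetical sample rows
levels = ["beginners", "intermediate", "advanced", "beginners"]

# LabelEncoder: codes follow alphabetical order (advanced=0, beginners=1, intermediate=2)
le_codes = LabelEncoder().fit_transform(levels)
print(le_codes)  # [1 2 0 1]

# Ordered Categorical: codes follow the order we list explicitly
ordered = pd.Categorical(
    levels, categories=["beginners", "intermediate", "advanced"], ordered=True
)
print(ordered.codes)  # [0 1 2 0]
```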

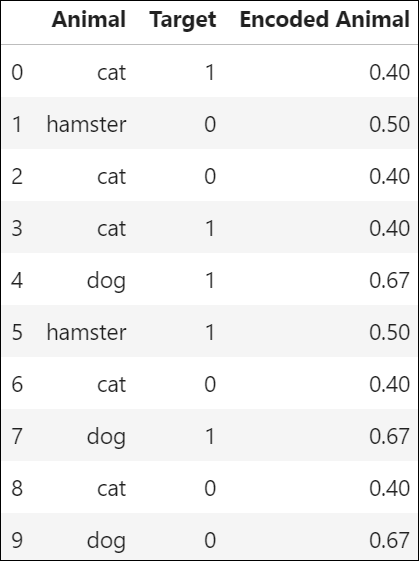

In this study, XGBoost with target and label encoding performed better on classes 0, 1, and 2, while XGBoost with one hot encoding and entity embedding performed better on classes 0 and 4. XGBoost with one hot encoding and entity embedding can lead to similar model performance.


Two encoding techniques commonly used for preprocessing data are One Hot Encoding and Label Encoding. Let us understand them one by one and learn the difference between the two.

Label Encoding. This is a data preprocessing technique that converts a categorical column to a numerical data type.

Related scikit-learn utilities include a transformer that performs an approximate one-hot encoding of dictionary items or strings; LabelBinarizer, which binarizes labels in a one-vs-all fashion; and MultiLabelBinarizer, which transforms between an iterable of iterables and a multilabel format, e.g. a (samples x classes) binary matrix indicating the presence of a class label.
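A minimal sketch of `LabelBinarizer` on hypothetical target labels. With three or more classes it produces one column per class (one-vs-all); with only two classes it returns a single column, which is worth knowing before feeding the output to a model.

```python
from sklearn.preprocessing import LabelBinarizer

# Multiclass case: one column per class
lb = LabelBinarizer()
matrix = lb.fit_transform(["cat", "dog", "fish", "dog"])
print(lb.classes_)  # ['cat' 'dog' 'fish']
print(matrix)
# [[1 0 0]
#  [0 1 0]
#  [0 0 1]
#  [0 1 0]]

# Binary case: a single 0/1 column rather than two
binary = LabelBinarizer().fit_transform(["yes", "no", "yes"])
print(binary.shape)  # (3, 1)
```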

One-Hot Encoder. Though label encoding is straightforward, it has the disadvantage that the numeric values can be misinterpreted by algorithms as having some sort of hierarchy or order. This ordering issue is addressed by another common alternative approach called one-hot encoding. In this strategy, each category value is converted into …

This problem can be solved by one-hot encoding, as it effectively changes the dimensionality of the feature "Dependents" from one to four, so every value of "Dependents" gets its own weight. The updated decision rule becomes $f'(w) < K$, where

$$f'(w) = W_1 D_0 + W_2 D_1 + W_3 D_2 + W_4 D_3$$
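A small numeric sketch of $f'(w)$, assuming the four "Dependents" categories are indexed 0 to 3 and using hypothetical weights $W_1, \dots, W_4$. Because exactly one $D_i$ is 1, $f'(w)$ picks out that category's own weight. For contrast, a single weight on a label-encoded column forces the contributions to grow in equal steps.

```python
import numpy as np

# Hypothetical weights W1..W4, one per "Dependents" category (indexed 0 to 3)
W = np.array([0.5, -1.0, 2.0, 0.25])

def f_prime(dependents_code):
    # One-hot vector D = [D_0, D_1, D_2, D_3] with a single 1
    D = np.zeros(4)
    D[dependents_code] = 1.0
    # f'(w) = W1*D_0 + W2*D_1 + W3*D_2 + W4*D_3
    return float(W @ D)

# Label-encoded alternative: one hypothetical weight w times the integer code
w = 1.5
for code in range(4):
    print(code, "one-hot:", f_prime(code), "label:", w * code)
```

With one-hot encoding, each category can push the decision in any direction independently; with a single label-encoded weight, the contributions are 0, 1.5, 3.0 and 4.5, always evenly spaced.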

In label encoding, we convert categorical values into numeric values by assigning each category a number. Say our categories are "pink" and "white": in label encoding, we replace pink with 1 and white with 0. This yields a single numerically encoded column. In one-hot encoding, by contrast, we end up with new columns, one per category.

One Hot Encoding: In this technique, we prepare a separate column for each label of a categorical parameter, here one for Male and one for Female. So whenever Gender is Male, the Male column holds 1 and the Female column holds 0, and vice versa.
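The Gender example can be sketched with pandas' `get_dummies` on a few hypothetical rows. `dtype=int` requests 1/0 values (recent pandas versions otherwise return True/False).

```python
import pandas as pd

# Hypothetical rows
df = pd.DataFrame({"Gender": ["Male", "Female", "Female", "Male"]})

# One column per label, holding 1 where the row has that label and 0 elsewhere
dummies = pd.get_dummies(df["Gender"], prefix="Gender", dtype=int)
print(dummies)
#    Gender_Female  Gender_Male
# 0              0            1
# 1              1            0
# 2              1            0
# 3              0            1
```

For linear models, passing `drop_first=True` keeps only one of the two columns, since the second is fully determined by the first.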


Two related approaches are integer encoding and one-hot encoding.

1. Integer Encoding. As a first step, each unique category value is assigned an integer value. For example, "red" is 1, "green" is 2, and "blue" is 3. This is called label encoding or integer encoding, and it is easily reversible. For some variables, this may be enough.
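Integer encoding with the red/green/blue mapping from the text can be sketched with plain dictionaries (the sample list of colors is hypothetical). Because the mapping is one-to-one, inverting the dictionary is all it takes to decode.

```python
# Integer encoding with the mapping from the text: red = 1, green = 2, blue = 3
to_int = {"red": 1, "green": 2, "blue": 3}
# The mapping is one-to-one, so it can be inverted to decode
to_label = {code: label for label, code in to_int.items()}

colors = ["green", "red", "blue", "green"]  # hypothetical sample
encoded = [to_int[c] for c in colors]
decoded = [to_label[i] for i in encoded]

print(encoded)  # [2, 1, 3, 2]
print(decoded)  # ['green', 'red', 'blue', 'green']
```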

If you use one-hot encoding, you represent the presence of 'dog' as a five-dimensional binary vector such as [0,1,0,0,0]. If you use multi-hot encoding, you first label-encode your classes, so that a single number represents the presence of a class (e.g. 1 for 'dog'), and then convert the numerical labels to …
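A sketch of the multi-hot procedure, assuming a hypothetical five-class vocabulary in which 'dog' has label 1 as in the text. Step one label-encodes each class name; step two uses scikit-learn's `MultiLabelBinarizer`, with the classes fixed to 0 to 4, to turn the integer labels into multi-hot vectors. A sample with only 'dog' comes out identical to the one-hot vector [0,1,0,0,0].

```python
from sklearn.preprocessing import MultiLabelBinarizer

# Hypothetical five-class vocabulary; 'dog' has label 1, as in the text
class_names = ["cat", "dog", "bird", "fish", "horse"]
label_of = {name: i for i, name in enumerate(class_names)}

samples = [["dog"], ["dog", "fish"]]
# Step 1: label-encode each class name to a single integer
label_ids = [[label_of[name] for name in sample] for sample in samples]  # [[1], [1, 3]]

# Step 2: convert the integer labels to multi-hot vectors of length 5
mlb = MultiLabelBinarizer(classes=list(range(len(class_names))))
multi_hot = mlb.fit_transform(label_ids)
print(multi_hot)
# [[0 1 0 0 0]
#  [0 1 0 1 0]]
```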

When the number of categories is small, one-hot encoding can be applied effectively. We apply label encoding when the categorical feature is ordinal (like Jr. kg, Sr. kg, Primary school, High school), or when the number of categories is quite large, since one-hot encoding can then lead to high memory consumption.
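Both criteria can be sketched with scikit-learn. For the ordinal school levels, `OrdinalEncoder` is given the categories explicitly so the codes follow the real order rather than alphabetical order. For the memory concern, a hypothetical feature with 1,000 distinct values becomes 1,000 columns under one-hot encoding but stays a single column under ordinal encoding.

```python
import numpy as np
from sklearn.preprocessing import OneHotEncoder, OrdinalEncoder

# Ordinal feature: list the categories explicitly so codes follow the real order
school_order = ["Jr. kg", "Sr. kg", "Primary school", "High school"]
school = np.array([["Primary school"], ["Jr. kg"], ["High school"], ["Sr. kg"]])

ordinal = OrdinalEncoder(categories=[school_order])
school_codes = ordinal.fit_transform(school).ravel()
print(school_codes)  # [2. 0. 3. 1.]

# High-cardinality feature (hypothetical): one-hot adds one column per distinct value
ids = np.array([[f"id_{i}"] for i in range(1000)])
ohe_shape = OneHotEncoder().fit_transform(ids).shape
ord_shape = OrdinalEncoder().fit_transform(ids).shape
print(ohe_shape)  # (1000, 1000)
print(ord_shape)  # (1000, 1)
```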
